About

Android, Linux, FLOSS etc.

Sat, 23 Dec 2017

So on February 13th I bought a Pixel phone as well as a Daydream VR headset. I set up the Android Studio "Treasure Hunt" sample, and modified it slightly to change the controller behavior and other things. This was all rather new, and there was not a lot available for it. I went into the Daydream VR store and downloaded some free games (and one paid one) and saw almost all of them were made with Unity. I saw that a lot was involved in building VR from scratch in Android Studio, and that the result was also restricted to just the Daydream VR headset, so I put it aside and worked on other things.

For most of this year I have only had an Ubuntu Linux laptop and desktop. I read several months ago that Unity had a Linux beta, but then read that it did not export scenes to Google Daydream VR. However, I have had a Mac notebook on loan since October, and I knew Mac could export to Google Daydream VR.

So on December 10th, I downloaded Unity to Linux and began looking at it. Unity has a tutorial called "roll a ball" where you make a game that rolls a ball around, picking up spinning cubes. While making the game, you're learning about the various aspects of Unity, writing short C# scripts and so forth. I finished that, and then had a nice little game. One nice part is that it was exportable to a number of OSes - Android, iOS, Linux, Mac etc. I played the game on my Linux laptop, and then played it on my Android.

Then I looked at the Google Daydream VR sample on Unity Linux. It downloaded, and I could edit the scene and preview it on Linux. Then I tried to export it to Android VR. No go. Well, Unity had warned me before I downloaded it, but I gave it a try.

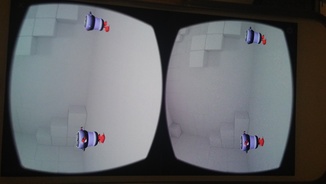

So I pulled out the MacBook I have on loan, downloaded Unity, downloaded the Google VR scene, and sent it to my Pixel. I put my Daydream VR headset on and, bam, I am in the scene I just compiled.

I make some minor modifications, and they pop up in the scene. I program in Unity, put the headset on, and am in the world I just made. When I want to make a change, I pop the headset off, go back to the keyboard, put the headset on again and am in the changed scene. Very cool.

At the local Android Developer Meetups is a fellow named Dario who works for HTC. He has been working with VR a lot. He thinks the interesting thing will be the building you can do within VR. One example I have seen of this is Medium, where you are molding a form together with your controller. In school I learned that one definition of an embedded system was a system that could not program itself. If you could change the world you were in from within using Daydream, Oculus, Vive etc., the scene would not be embedded.

In the XScreenSaver source code is a DXF file to build a robot, so I popped it into Unity. It was way too big for the base Unity Daydream sample app scene. So I scaled it down a bit. Better.

But it was all one color. So I looked at winduprobot.c to see what was being sent to glColor3f for the various robot parts. I dropped those colors in as materials and now the robot was colored properly.

But the DXF was only half a robot. So I looked in winduprobot.c again and mirrored or otherwise transformed various parts so that the body inside, body outside, leg, and arm-part would appear on both sides.
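
The mirroring idea can be sketched in a few lines. This is plain Python rather than Unity C#, and it is not taken from winduprobot.c; the function names and the choice of the x = 0 plane as the mirror are assumptions made for illustration. It flips one coordinate of every vertex and reverses each triangle's winding order, since a reflection otherwise turns faces inside out.

```python
# Hypothetical helpers for illustration; not from winduprobot.c or Unity.

def mirror_mesh(vertices, triangles, axis=0):
    """Return a mirrored copy of a triangle mesh across the plane axis = 0.

    vertices:  list of (x, y, z) tuples
    triangles: list of (a, b, c) index tuples into vertices
    """
    mirrored_vertices = []
    for v in vertices:
        m = list(v)
        m[axis] = -m[axis]  # flip one coordinate
        mirrored_vertices.append(tuple(m))
    # A reflection reverses handedness, so swap two indices per triangle
    # to keep the faces pointing outward.
    mirrored_triangles = [(a, c, b) for (a, b, c) in triangles]
    return mirrored_vertices, mirrored_triangles


def complete_model(vertices, triangles, axis=0):
    """Join a half model with its mirror image into one mesh."""
    mirrored_vertices, mirrored_triangles = mirror_mesh(vertices, triangles, axis)
    offset = len(vertices)  # mirrored indices start after the originals
    all_vertices = list(vertices) + mirrored_vertices
    all_triangles = list(triangles) + [
        (a + offset, b + offset, c + offset) for (a, b, c) in mirrored_triangles
    ]
    return all_vertices, all_triangles
```

In Unity itself a similar effect can often be had by duplicating a part and giving it a negative scale on one axis, though that is a different route from the one sketched here.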

So that is where I am now with it.

It is pretty cool to be able to drop 3D models into the world, write little C# (or JavaScript) programs for the world and so forth, have it all pop up, and be in that world.

In the future I might look into Godot Engine, which is getting some AR/VR support, or look back at the Android Studio VR modules, or into other things. Unity is a good, easy base to survey these things from, though.

Wed, 28 Dec 2016

So having tried out the HTC Vive two weeks ago, I decided to go to Best Buy and give the Oculus Rift a try.

The Microsoft Store had more of an area set out for the Gear demo; the Rift area was smaller and not partitioned off. The demo guy worked for neither Best Buy nor Facebook/Oculus, but for a third party, though he was more connected to Facebook/Oculus than to Best Buy.

The setup had the Rift, a sensor, Rift headphones, and the new Oculus Touch controls. I put on the headset and then the touch controls. Like the Vive, you can see your virtual hands in front of you. You begin in something like a hotel lobby which is a waiting area of sorts. Then you're put on the edge of a skyscraper, and can look over the edge. I definitely had some visceral feeling of vertigo doing that. You're also put in a museum with a rampaging dinosaur, which looked real enough. You also get to watch two little towns operate. You also meet an alien. I believe this is the "dreamdeck" demo, although I don't recall seeing robots.

Then you're in an empty VR room and you learn how to use the Touch controllers. Then you can choose what app to use - I chose Medium, a sculpting app. It's cool: you choose whether you're left or right handed, and then the left hand does things like undo the last thing you did with your right hand. You get to sculpt a 3D tree. Your right hand keeps transforming from one tool to another depending on the job - first you place your tree upright, then add branches, then add more bulk to the trunk, then sculpt down the branches, then add leaves etc. 3D sculpting.

In my experience, the Vive felt more like 3D, while the Rift felt a little more like I was looking at two screens. As I adjusted the headset it felt less like that, though - I'm not sure if that was the Rift or me just not tightening it properly.

One nice thing about the Vive demo was some of it was a little less guided - I could move around and manipulate what I wanted. The Oculus demo was guided each step.

Still, it was pretty awesome. This is just the first generation, and the headsets will get better and cheaper as time goes on. VR is obviously already here for early adopters; with killer apps and cheaper and better hardware it will take over the video game market.

Thu, 15 Dec 2016

So today I went down to the Roosevelt Field shopping mall. I saw Microsoft had a store there, and often I would just walk by, but I decided to see what they had there.

Among the various devices were boxes with Oculus Rifts and HTC Vives in them. They even had the HTC Vive set up for a demo. I have of course been hearing a lot about VR since the Oculus Rift Kickstarter kicked off in 2012 (actually I've been hearing about it before that even). I've never tried the Vive or Rift though. Actually the Rift's hand controllers, Oculus Touch, just came out last week, so I'm not all that late in this.

It was quite amazing. There have been a number of times in my life that I have seen a new piece of technology - a PC, a modem, a Unix box on the Internet, a web browser - and it was immediately obvious how impactful this technology would be on the world. VR in the Vive was one of those experiences. Seeing it, you can foresee the massive changes this new piece of technology will engender.

One thing a lot of people who have seen this have said is you have to see it to understand. You can explain it to people - but people won't really have an understanding of it until they use it. Because it is so visceral. It definitely has the "presence" within the immersion that people talk about.

I didn't realize how interactive it was. I walked around. I was under the ocean looking at fish, whales and a sunken ship. I picked up objects. I picked up mallets and played Mary Had A Little Lamb on a xylophone in a wizard's workshop, which then played over the store's speakers.

When I took the headset off after a few minutes I experienced what some have discussed. It was slightly disorienting. My central nervous system said - how did you get from the bottom of the ocean to wandering around this mall so quickly? It's a sign of just how well this relatively inexpensive and relatively portable system has finally got immersion and presence right.